Human Causal Transfer: Challenges for Deep Reinforcement Learning

Mark Edmonds1∗, James Kubricht2∗, Colin Summers3,4, Yixin Zhu5, Brandon Rothrock3, Song-Chun Zhu1,5, Hongjing Lu2,5

∗ Equal contributors | 1 Department of Computer Science, UCLA | 2 Department of Psychology, UCLA | 3 Jet Propulsion Laboratory, Caltech | 4 Department of Computer Science, UW | 5 Department of Statistics, UCLA

Abstract

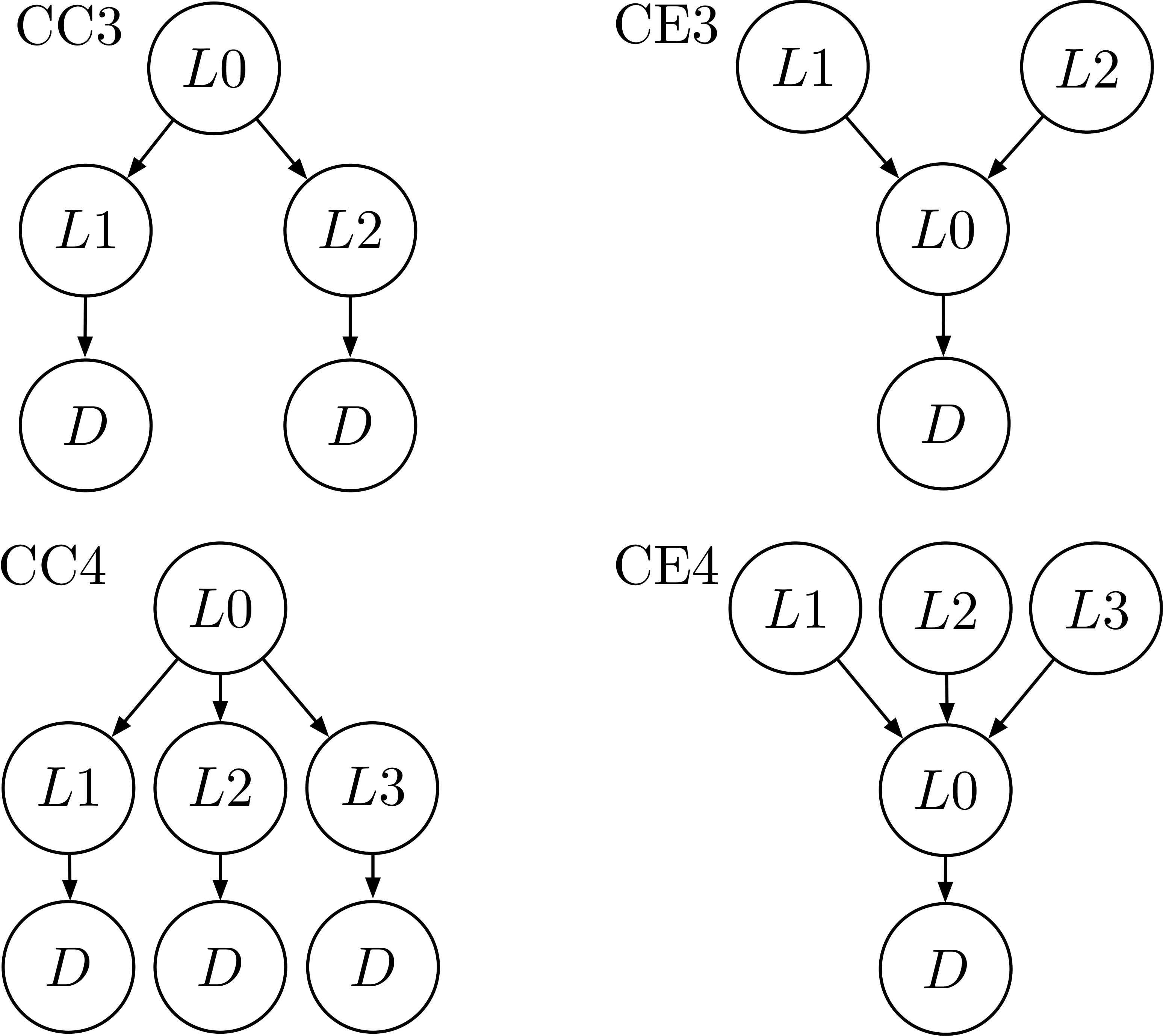

Discovery and application of causal knowledge in novel problem contexts is a prime example of human intelligence. As new information is obtained from the environment during interactions, people develop and refine causal schemas to establish a parsimonious explanation of underlying problem constraints. The aim of the current study is to systematically examine the human ability to discover causal schemas by exploring the environment and to transfer that knowledge to new situations with greater or different structural complexity. We developed a novel OpenLock task, in which participants explored a virtual “escape room” environment by moving levers that served as “locks” to open a door. In each situation, the sequential movements of the levers that opened the door formed a branching causal sequence that began with either a common-cause (CC) or a common-effect (CE) structure. Participants in a baseline condition completed five trials with high structural complexity (i.e., four active levers). Those in the transfer conditions completed six training trials with low structural complexity (i.e., three active levers) before completing a high-complexity transfer trial. The causal schema acquired during the training trials was either congruent or incongruent with the schema in the transfer trial. Baseline performance under the CC schema was superior to performance under the CE schema, and schema congruency facilitated transfer performance when the congruent schema was the less difficult CC schema. We compared human performance, measured between subjects, with that of a standard deep reinforcement learning model (DDQN) and found that the model was unable to capture the causal abstraction presented between trials with the same causal schema and between trials with a transfer of causal schema.